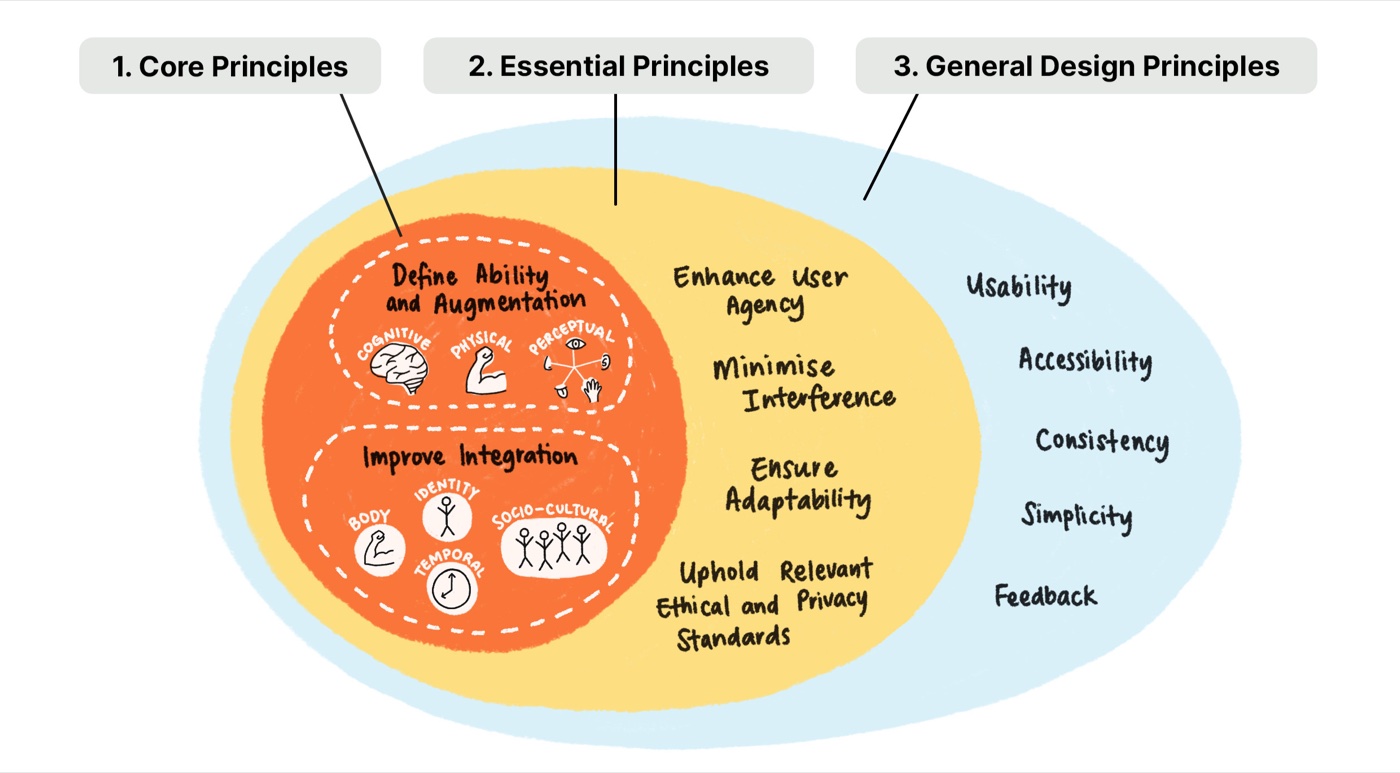

Technology as a seamless extension of human ability.

We believe that wearable computing and intelligent user interface systems offer tremendous opportunities to revolutionize our daily interactions. However, today's user interfaces often still fail to meet actual needs, especially for people with disabilities who rely on assistive technologies.

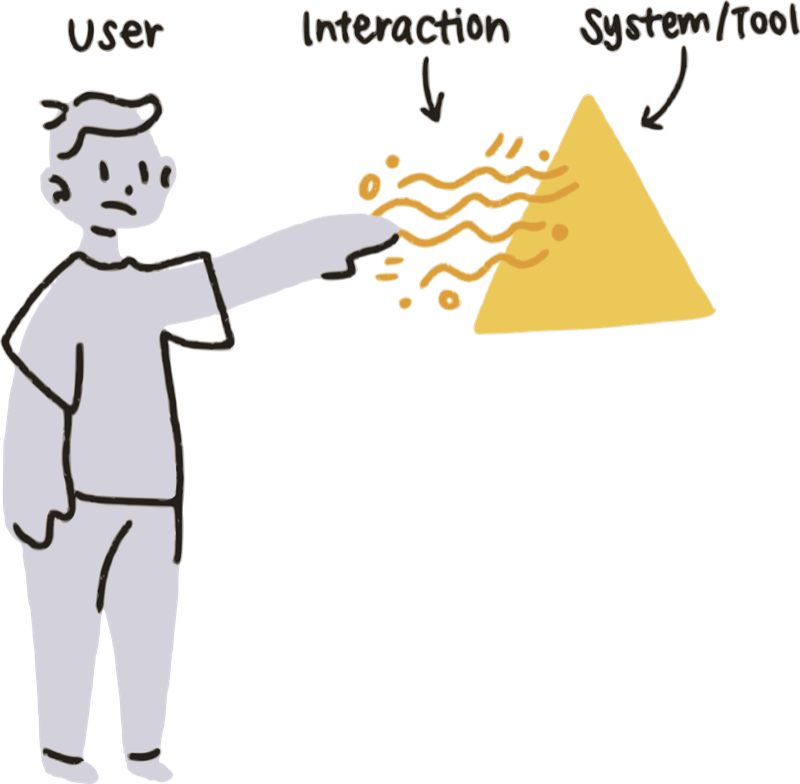

We advocate for a paradigm shift in which technology is seen not as a separate tool but as a seamless extension of the human body, mind, and identity. We call this holistic approach "Assistive Augmentation."
