Mark Billinghurst

Prof. Mark Billinghurst has a wealth of knowledge and expertise in human-computer interface technology, particularly in the area of Augmented Reality (the overlay of three-dimensional images on the real world).

In 2002, the former HIT Lab US Research Associate completed his PhD in Electrical Engineering at the University of Washington, under the supervision of Professor Thomas Furness III and Professor Linda Shapiro. As part of the research for his thesis, titled Shared Space: Exploration in Collaborative Augmented Reality, Dr Billinghurst invented the Magic Book, an animated children’s book that comes to life when viewed through a lightweight head-mounted display (HMD).

Not surprisingly, Dr Billinghurst has received several accolades for his contributions to Human Interface Technology research. He received a Discover Magazine Award for Entertainment in 2001 for creating the Magic Book technology. He was selected as one of eight leading New Zealand innovators and entrepreneurs showcased at the Carter Holt Harvey New Zealand Innovation Pavilion at the America’s Cup Village from November 2002 until March 2003. In 2004 he was nominated for a prestigious World Technology Network (WTN) World Technology Award in the education category, and in 2005 he was appointed to the New Zealand Government’s Growth and Innovation Advisory Board.

Originally educated in New Zealand, Dr Billinghurst is a two-time graduate of Waikato University, where he completed a BCMS (Bachelor of Computing and Mathematical Science) with first-class honours in 1990 and a Master of Philosophy (Applied Mathematics & Physics) in 1992.

Research interests: Dr Billinghurst’s research focuses primarily on advanced 3D user interfaces, such as:

Wearable Computing – Spatial and collaborative interfaces for small wearable computers. These interfaces explore what becomes possible when ubiquitous computing and communications are merged on the body.

Shared Space – An interface that demonstrates how augmented reality, the overlaying of virtual objects on the real world, can radically enhance face-to-face and remote collaboration.

Multimodal Input – Combining natural language and artificial intelligence techniques to allow human-computer interaction through an intuitive mix of speech, gesture, gaze and body motion.

-

Augmented Tabletop Interaction as an Assistive Tool: Tidd’s Role in Daily Life Skills Training for Autistic Children

Wu, Q., Wang, W., Liu, Q., Zhang, R., Pai, Y.S., Billinghurst, M. and Nanayakkara, S.C., 2025. Augmented tabletop interaction as an assistive tool: Tidd’s role in daily life skills training for autistic children. International Journal of Human-Computer Studies, p.103517.

-

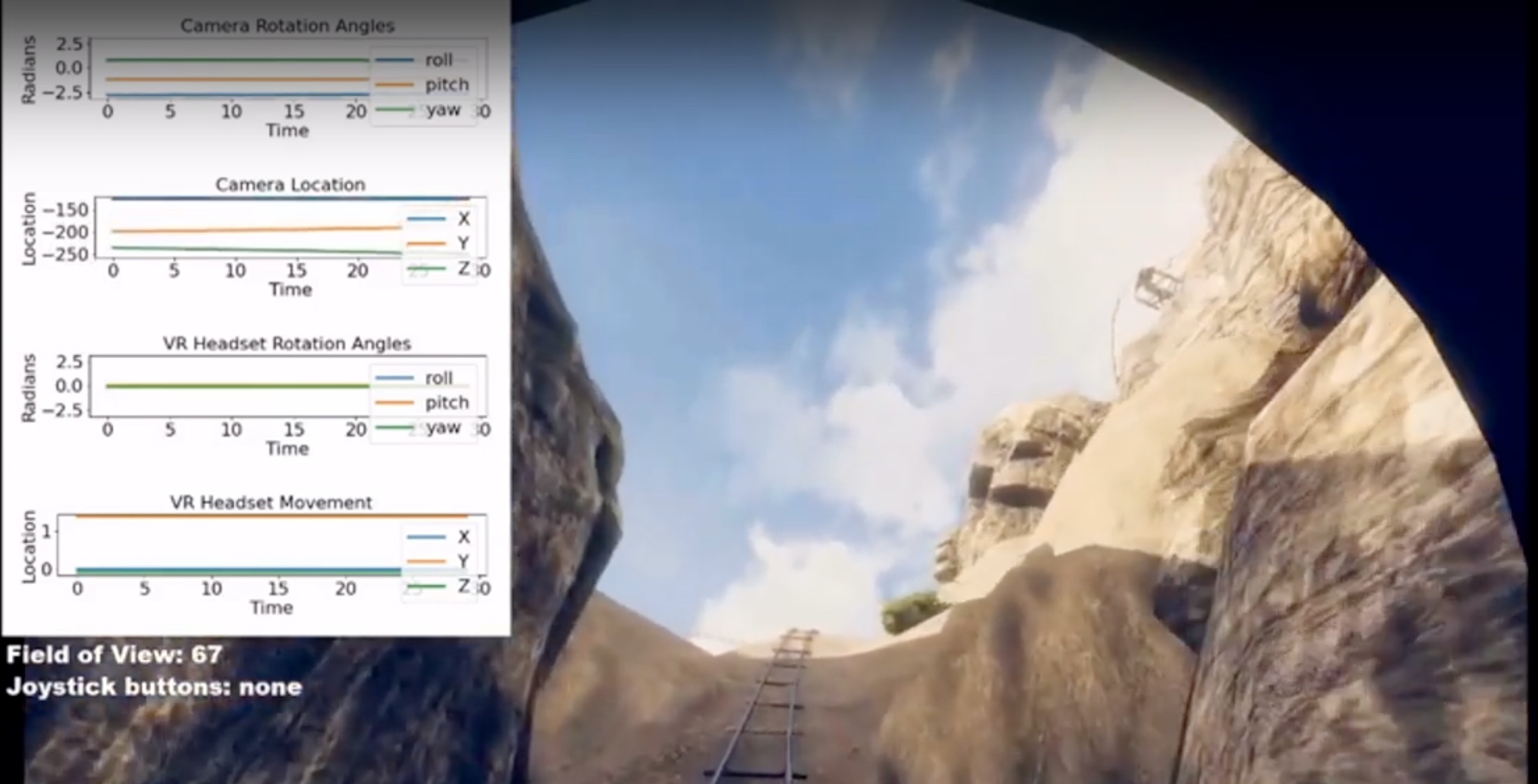

VR.net: A Real-world Dataset for Virtual Reality Motion Sickness Research

Wen, E., Gupta, C., Sasikumar, P., Billinghurst, M., Wilmott, J., Skow, E., Dey, A. and Nanayakkara, S.C., 2024. VR.net: A Real-world Dataset for Virtual Reality Motion Sickness Research. In The 31st IEEE Conference on Virtual Reality and 3D User Interfaces. Best Paper Award.

-

Early Autism Screening in Children Using Facial Recognition

Wu, Q., Xiao, X., Liu, Y., Billinghurst, M. and Nanayakkara, S.C., 2024, May. Early autism screening in children using facial recognition. In Extended Abstracts of the CHI Conference on Human Factors in Computing Systems (pp. 1-7).

-

A User Study on Sharing Physiological Cues in VR Assembly Tasks

Sasikumar, P., Hajika, R., Gupta, K., Selvan, T., Pai, Y.S., Bai, H., Nanayakkara, S.C. and Billinghurst, M., 2024. A User Study on Sharing Physiological Cues in VR Assembly Tasks. In IEEE Conference on Virtual Reality and 3D User Interfaces, 2024.

-

XRtic: A Prototyping Toolkit for XR Applications using Cloth Deformation

Muthukumarana, S., Nassani, A., Park, N., Steimle, J., Billinghurst, M. and Nanayakkara, S.C., 2022. XRtic: A Prototyping Toolkit for XR Applications using Cloth Deformation. In 2022 IEEE International Symposium on Mixed and Augmented Reality (ISMAR). IEEE.

-

VRhook: A Data Collection Tool for VR Motion Sickness Research

Wen, E., Kaluarachchi, T., Siriwardhana, S., Tang, V., Billinghurst, M., Lindeman, R.W., Yao, R., Lin, J. and Nanayakkara, S.C., 2022. VRhook: A Data Collection Tool for VR Motion Sickness Research. In The 35th Annual ACM Symposium on User Interface Software and Technology (UIST ’22), October 29-November 2, 2022, Bend, OR, USA.

-

Speech Emotion Recognition ‘in the Wild’ Using an Autoencoder

Dissanayake, V., Zhang, H., Billinghurst, M. and Nanayakkara, S.C., 2020. Speech Emotion Recognition ‘in the wild’ using an Autoencoder. Proc. Interspeech 2020, pp.526-530.

-

Multimodal Emotion Recognition With Transformer-Based Self Supervised Feature Fusion

Siriwardhana, S., Kaluarachchi, T., Billinghurst, M. and Nanayakkara, S.C., 2020. Multimodal Emotion Recognition With Transformer-Based Self Supervised Feature Fusion. IEEE Access, 8, pp.176274-176285.